Efficient Test-Time Scaling via Probing Internal States of Large Language Models

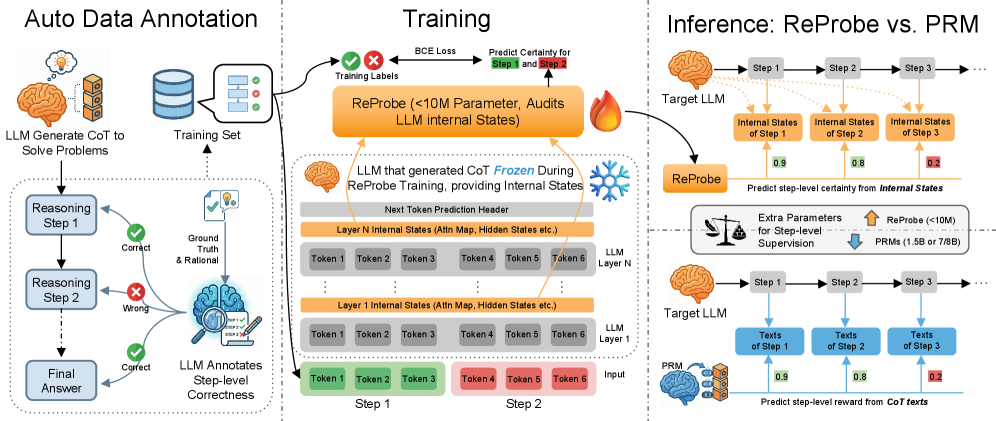

ReProbe replaces bulky Process Reward Models (PRMs) with a probe of fewer than 10M parameters that reads directly from the LLM's own hidden states. It achieves comparable or better step-level verification at a fraction of the cost.
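The following sketch shows how step-level scores can drive test-time scaling through best-of-N selection. Each candidate chain of thought is split into steps, and a probe assigns each step a probability of being correct. The candidate whose weakest step scores highest is chosen. The linear `probe_score` stand-in, the min-aggregation rule, and all function names are hypothetical and only illustrate the idea. They are not ReProbe's actual interface.

```python
import math
from typing import List, Sequence

Vector = Sequence[float]


def probe_score(hidden: Vector, weights: Vector, bias: float) -> float:
    """Hypothetical linear probe: sigmoid(w . h + b) read as P(step is correct)."""
    z = sum(w * h for w, h in zip(weights, hidden)) + bias
    return 1.0 / (1.0 + math.exp(-z))


def solution_score(step_hiddens: List[Vector], weights: Vector, bias: float) -> float:
    """Aggregate step scores with min (an assumption): a chain is only as good as its weakest step."""
    return min(probe_score(h, weights, bias) for h in step_hiddens)


def best_of_n(candidates: List[List[Vector]], weights: Vector, bias: float) -> int:
    """Return the index of the candidate with the highest aggregated probe score."""
    scores = [solution_score(c, weights, bias) for c in candidates]
    return max(range(len(candidates)), key=lambda i: scores[i])


# Toy usage with 2-dimensional "hidden states" (real ones would come from the frozen LLM).
w, b = [1.5, -0.5], 0.0
cand_a = [[1.0, 0.0], [-2.0, 1.0]]  # the second step looks wrong to the probe
cand_b = [[0.8, 0.2], [0.6, 0.1]]   # every step looks reasonable
print(best_of_n([cand_a, cand_b], w, b))  # prints 1
```

Min-aggregation rejects a chain that contains even one step the probe considers wrong. Mean or product aggregation are common alternatives with different trade-offs.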

To load a checkpoint, first set up the target LLM following the GitHub instructions, then attach the probe weights as described in the loading guide.

Overview of ReProbe training and inference. An annotator LLM labels step correctness on CoT traces (left). ReProbe is trained on internal signals from the frozen target LLM (middle). At inference, the tiny probe (<10M params) replaces a 1.5–8B-parameter PRM (right).
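The training stage in the figure amounts to supervised learning on frozen features. The target LLM is never updated, and only the small probe is fit to the annotator's step labels. The minimal sketch below trains a logistic-regression probe with full-batch gradient descent on binary cross-entropy. The toy feature vectors stand in for hidden states that would actually be extracted from the frozen LLM. The probe architecture, loss, and hyperparameters are assumptions chosen for illustration, not ReProbe's published training recipe.

```python
import math
import random
from typing import List, Tuple


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def train_probe(
    data: List[Tuple[List[float], int]],  # (hidden-state features, annotator label: 1 = correct step)
    lr: float = 0.5,
    epochs: int = 200,
) -> Tuple[List[float], float]:
    """Fit a logistic-regression probe by full-batch gradient descent on BCE loss.
    Only the probe parameters change; the features (from the frozen LLM) are fixed inputs."""
    dim = len(data[0][0])
    w, b = [0.0] * dim, 0.0
    n = len(data)
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * dim, 0.0
        for x, y in data:
            p = sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)
            err = p - y  # derivative of BCE with respect to the logit
            for j in range(dim):
                grad_w[j] += err * x[j]
            grad_b += err
        w = [wi - lr * g / n for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def predict(w: List[float], b: float, x: List[float]) -> float:
    """Probe's estimated probability that the step with features x is correct."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)


# Toy stand-in data: the "annotator" label depends on the sign of the first feature.
random.seed(0)
toy = []
for _ in range(100):
    x = [random.uniform(-1, 1), random.uniform(-1, 1)]
    toy.append((x, 1 if x[0] > 0 else 0))

w, b = train_probe(toy)
accuracy = sum((predict(w, b, x) > 0.5) == bool(y) for x, y in toy) / len(toy)
print(f"training accuracy: {accuracy:.2f}")
```

Because the LLM's features are fixed, training cost scales with the probe's size rather than the LLM's. This is what makes a small probe cheap to fit compared with fine-tuning a separate PRM.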